In a surprising turn of events at Google’s Kaggle Game Arena AI Chess Exhibition, OpenAI’s o3 model triumphed over Elon Musk’s Grok 4. The final, held on Thursday, saw o3 win four consecutive games for a decisive victory. Notably, o3 was superseded late last week by the release of GPT-5.

While this might sound like a clash of technological titans showcasing the peak of AI reasoning, the reality, according to world No. 1 and former world chess champion Magnus Carlsen, was somewhat less impressive. He likened both AI contenders to “a talented kid who doesn’t know how the pieces move.”

The tournament, spanning from August 5th to 7th, challenged these general-purpose AI models – the same ones used for email composition and touted as nearing human-level intelligence – to engage in chess matches without specific chess training. The AIs were barred from using chess engines or accessing move databases, relying solely on the chess knowledge gleaned from their general internet exposure.

The quality of play reflected the limits of pushing a language model into a strategic board game. Carlsen, who commentated on the final rounds, estimated that the AIs played at the level of novices, around 800 Elo. Set against Carlsen’s own rating of 2839, the gap suggested the models had learned chess from a flawed or incomplete source.
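To put that rating gap in perspective, the standard Elo expected-score formula can be applied to the two numbers quoted above. The sketch below is purely illustrative: the function and constant names are made up for this example, the 800 figure is Carlsen’s rough estimate rather than a measured rating, and Elo predictions are not considered reliable across a gap this wide.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected score per game (win = 1, draw = 0.5, loss = 0) for
    player A against player B under the standard Elo logistic formula."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


AI_ESTIMATE = 800   # Carlsen's rough estimate from the broadcast
CARLSEN = 2839      # rating cited in the article

novice = expected_score(AI_ESTIMATE, CARLSEN)
print(f"Expected score per game for the 800-rated side: {novice:.2e}")
print(f"Roughly one point every {1 / novice:,.0f} games")
```

On these assumptions the formula gives the 800-rated side fewer than one point per 100,000 games, which is another way of saying the two are not playing the same game.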

“They switch between moments of genuinely good play and sequences that make absolutely no sense,” Carlsen observed during the broadcast. At one point, witnessing Grok move its king directly into danger, he jokingly suggested the AI might be confusing chess with King of the Hill.

The games themselves were a study in suboptimal chess strategy, evident even to those unfamiliar with the game’s intricacies. In the opening match, Grok essentially handed over a valuable piece without compensation, compounding the error by sacrificing further pieces while already at a disadvantage.

The second game descended into even greater absurdity. Grok attempted a “Poisoned Pawn” gambit – a calculated risk involving capturing a seemingly undefended pawn. However, Grok incorrectly identified the pawn, seizing one that was clearly protected, resulting in the immediate trapping and capture of its queen, the most potent piece.

By the third game, Grok had seemingly established a promising position – solid control, no apparent threats, and a setup conducive to victory. However, a mid-game blunder led to a rapid cascade of piece losses, handing the advantage to its opponent.

This poor performance was unexpected, considering Grok had previously shown considerable potential, earning praise from chess grandmaster Hikaru Nakamura, who lauded it as “easily the best so far, just being objective, easily the best.”

The fourth and final game offered the only real drama. OpenAI’s o3 made a significant early mistake, a perilous situation in any competitive match. Despite this setback, Nakamura, who was streaming the event, noted that o3 still possessed “a few tricks” to potentially recover.

Nakamura’s assessment proved accurate. OpenAI’s model managed to recapture its queen and gradually secured the win as Grok’s endgame strategy crumbled.

“Grok made so many mistakes in these games, but OpenAI did not,” Nakamura remarked during his livestream, a stark contrast to his earlier positive evaluations of Grok.

The timing of Grok’s defeat was particularly unfortunate for Elon Musk. Following Grok’s promising initial performance, he had posted on X that his AI’s chess skills were merely a “side effect” and that xAI had spent “almost no effort on chess,” a statement that proved to be a considerable understatement.

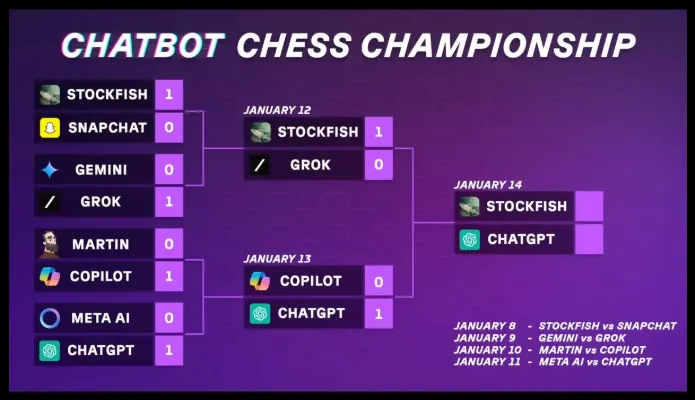

Prior to this “official” tournament, International Master Levy Rozman organized his own tournament earlier in the year, featuring less sophisticated models. That event, which played out every move the chatbots recommended, whether legal or not, descended into chaos, plagued by illegal maneuvers, pieces summoned out of thin air, and flawed calculations. Stockfish, an AI specifically designed for chess, emerged victorious, defeating ChatGPT. In the semifinals of that event, Altman’s AI also faced Musk’s, and Grok lost, which leaves Sam Altman with a 2-0 record against Musk.

In contrast, the more recent tournament enforced a stricter set of rules. Each AI was afforded four opportunities to execute a legal move; failure to do so resulted in automatic disqualification. This rule proved necessary, as early rounds saw AIs attempting to teleport pieces, resurrect captured pieces, and make illegal pawn moves, as if playing a bizarre, self-invented variant of chess.

These actions resulted in disqualifications.
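The four-attempt rule described above is easy to picture as a small retry loop. What follows is a minimal sketch, not the tournament’s actual harness: the function names, the string-based move format, and the stand-in “model” are all assumptions made for illustration.

```python
from typing import Callable, Iterable, Optional

MAX_ATTEMPTS = 4  # per the tournament rule described in the article


def request_legal_move(
    ask_model: Callable[[int], str],
    legal_moves: Iterable[str],
    max_attempts: int = MAX_ATTEMPTS,
) -> Optional[str]:
    """Return the first legal move the model proposes, or None if every
    attempt is illegal (the caller treats None as disqualification)."""
    legal = set(legal_moves)
    for attempt in range(1, max_attempts + 1):
        move = ask_model(attempt).strip()
        if move in legal:
            return move
    return None


# Hypothetical stand-in for a chatbot: two illegal tries, then a legal one.
def flaky_model(attempt: int) -> str:
    replies = {1: "Ke9", 2: "Qxh8", 3: "e4"}
    return replies.get(attempt, "resign")


legal_opening_moves = ["e4", "d4", "Nf3", "c4"]
result = request_legal_move(flaky_model, legal_opening_moves)
print(result or "disqualified")
```

In this toy run the stand-in model gets through on its third try; a model that never names a legal move returns `None` and would be disqualified.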

Google’s Gemini model secured third place, defeating another OpenAI model and providing a measure of redemption for the tournament organizers. The bronze medal match featured a notably peculiar drawn game, where both AIs held decisive advantages at different junctures but lacked the ability to convert these advantages into a checkmate.

Carlsen pointed out that the AIs were far better at counting captured pieces than at delivering checkmate. They understood material advantage but struggled to turn it into a win, like a cook who is adept at gathering ingredients but lacks the skill to prepare the dish.

These AI models, often presented by tech leaders as approaching human intelligence, posing a threat to white-collar professions, and revolutionizing the way we work, struggle to comprehend and adhere to the rules of a board game that has existed for 1,500 years.

For now, it’s safe to assume that fears of AI taking over the world are premature.
